Autonomy Team

03/2026

Imagine a modern factory environment: sparks fly during welding, robot arms work with high precision, and conveyor systems move components through production in a steady rhythm. The processes look well-orchestrated and efficient.

But it is precisely in these highly optimized workflows that the structural limits of traditional robotics become apparent. As soon as conditions change, variants increase, or components deviate even slightly from their target position, rigid programming logic reaches its breaking point. Even small deviations can require manual intervention - or, in the worst case, lead to production downtime.

This is where a new era of technology begins: Physical AI. It combines digital intelligence with physical interaction and enables machines not only to detect their surroundings, but to truly understand them.

Physical AI is the most important technology within a five-level development program that RobCo has established for building autonomous robots. At the end of this five-level scale is a robot you can talk to, show a task, and then have it autonomously perform multiple activities. The journey there is driven by the increasing use of sensor-based learning methods that allow systems to gain experience and continuously improve.

These methods resemble the ones that have revolutionized text, image, and video processing through large language models in recent years. They complement what is already proven and widely used: flow-based robot motion, optimized path planning, and computer vision. The higher we move on the autonomy scale, the less the robot executes rigid, manually created programs - and the more it perceives its environment via sensors and runs learned models to perform tasks more flexibly and intelligently. For companies in industry or logistics, this means that AI robots can already take on tasks today that were previously considered “not automatable.” Through learning methods and massive data, these systems develop capabilities that go far beyond simply executing lines of code.

Physical AI enables machines to identify situations, evaluate them, and independently make well-founded decisions. This is a decisive step on the road to autonomous manufacturing.

RobCo’s modular hardware architecture grows flexibly with your needs. Systems can be expanded, adapted, and scaled across sites.

With reduced integration hurdles and the Robotics-as-a-Service model, automation becomes predictable and economical. Companies reach measurable ROI more quickly.

Physical AI is the technology that enables robots to perceive and interpret their real-world environment and respond independently based on that understanding. It is the tool on the path toward greater autonomy in industry.

Unlike purely software-based AI, progress happens in real industrial use. Systems gain experience directly on the factory floor. Learning becomes the core mechanism for continuous improvement.

By imitating human motion patterns (imitation learning) and systematically adapting to local conditions (reinforcement learning), machines evolve step by step and increase their performance over time.

Modular systems reduce both integration effort and investment risk, making learning-capable robotics economically accessible for industrial companies of any size.

To grasp the concept behind Physical AI, a clear definition is helpful: while classical artificial intelligence primarily processes information in digital form (such as text or images), Physical AI brings that intelligence into the material world. The idea is that a machine learns how to move and act in an unstructured environment without having every millimeter programmed in advance.

Physical AI is often confused with large language models (LLMs), but the differences are fundamental. While LLMs are trained to find patterns and logical relationships in language (i.e., they are purely software-based AI), Physical AI must understand physical laws such as gravity, friction, and torque.

Intelligence does not emerge in a vacuum. It needs feedback. RobCo follows an approach similar to human learning: Physical AI replaces theoretical assumptions with concrete experience. Every action generates new data from which the system learns and continuously improves its performance. Only through this physical connection to the world does computation become real learning. Without a body, there would be no immediate feedback on whether a movement succeeded - or failed.
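The feedback principle described above can be illustrated with a small sketch. It is not RobCo's method: it uses a simple epsilon-greedy rule, the strategy names (`top_grasp`, `side_grasp`, `pinch_grasp`) are hypothetical, and the success probabilities only simulate the physical outcome that a real robot would observe through its sensors.

```python
import random


def run_feedback_loop(true_success, attempts=2000, epsilon=0.1, seed=0):
    """Learn which strategy works best purely from observed outcomes.

    true_success maps a strategy name to its (hidden) success probability.
    These probabilities are an assumption used to simulate the real world.
    """
    rng = random.Random(seed)
    names = list(true_success)
    counts = {name: 0 for name in names}
    estimates = {name: 0.0 for name in names}

    for _ in range(attempts):
        if rng.random() < epsilon:
            # Explore: occasionally try a strategy at random.
            choice = rng.choice(names)
        else:
            # Exploit: use the strategy with the best estimate so far.
            choice = max(names, key=estimates.get)

        # "Physical" feedback: did the action succeed or fail? (simulated)
        succeeded = rng.random() < true_success[choice]

        # Update the running success rate with the new experience.
        counts[choice] += 1
        estimates[choice] += (float(succeeded) - estimates[choice]) / counts[choice]

    return estimates, counts


estimates, counts = run_feedback_loop(
    {"top_grasp": 0.9, "side_grasp": 0.6, "pinch_grasp": 0.3}
)
best = max(estimates, key=estimates.get)
print(best, counts)
```

The system starts with no knowledge of which strategy works. Every attempt, successful or not, refines its estimates, so most attempts gradually shift to the strategy that works best in practice.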

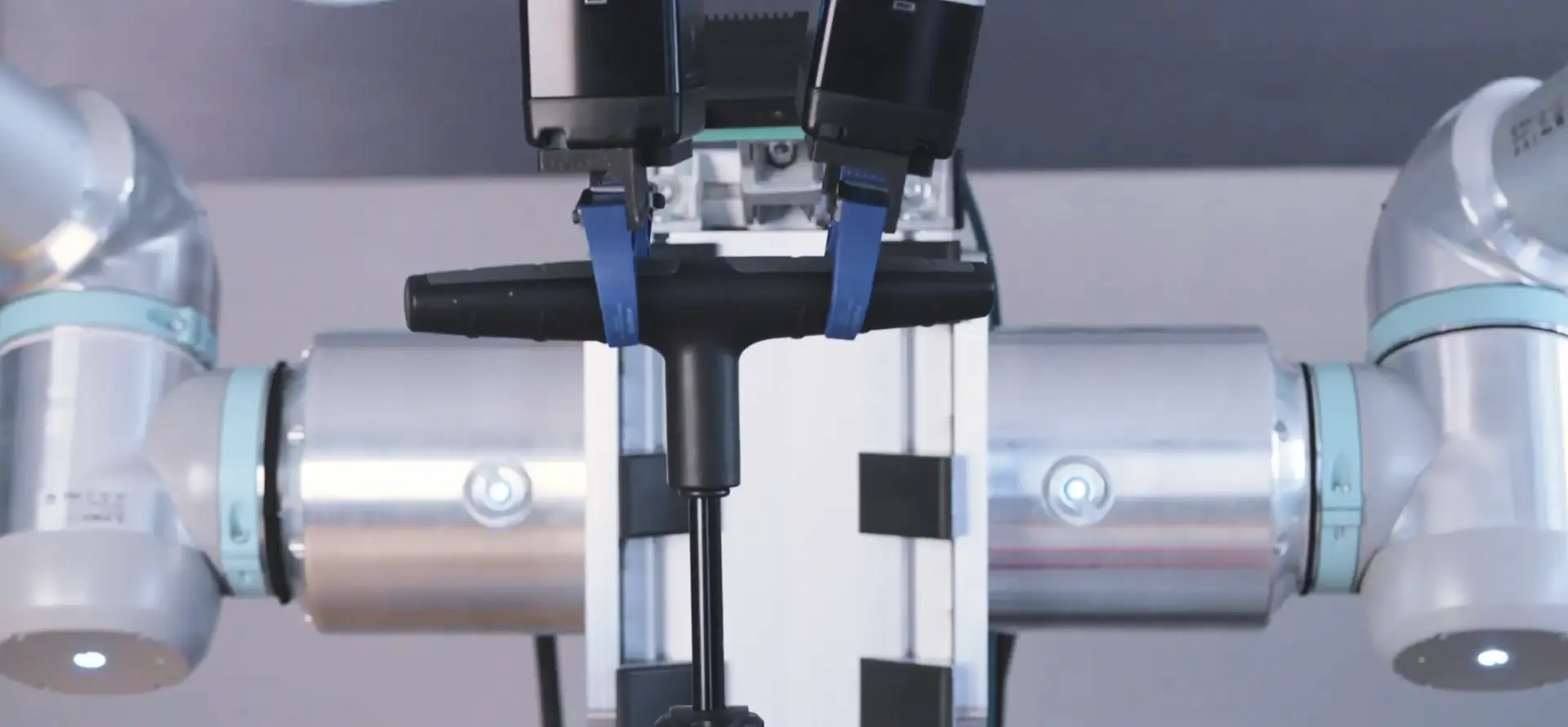

For a robot to act autonomously, it needs senses. This is where technologies such as high-resolution camera systems, motor encoders, tactile sensors, and other monitoring sensors come into play. These sensors allow the system to perceive its environment with precision. Actuation - motors and joints - then turns these insights into precise motion. RobCo uses a modular design in which the hardware serves as a flexible body for the AI stack. Signals from individual modules are automatically merged into one overall system. The autonomy system always knows which embodiment is being used - regardless of how the robot is assembled.
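One way to picture how signals from individual modules could be merged into a single system view is the sketch below. The `Module` type, the module names, and the channel naming scheme are assumptions made for illustration and do not describe RobCo's actual software interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    """A hypothetical hardware module in the kinematic chain."""
    name: str            # unique identifier of the module
    joints: int          # number of actuated joints the module adds
    sensors: tuple = ()  # sensor channels the module reports


def describe_embodiment(modules):
    """Derive one system-wide description from the assembled modules."""
    names = [m.name for m in modules]
    if len(set(names)) != len(names):
        raise ValueError("module names must be unique")
    return {
        "chain": names,
        "degrees_of_freedom": sum(m.joints for m in modules),
        "channels": [f"{m.name}/{s}" for m in modules for s in m.sensors],
    }


def merge_readings(modules, readings):
    """Flatten {module: {sensor: value}} into one namespaced state dict."""
    return {
        f"{m.name}/{s}": readings[m.name][s]
        for m in modules
        for s in m.sensors
    }


# Example assembly (all values are made up for illustration).
arm = [
    Module("base", joints=1, sensors=("encoder",)),
    Module("elbow", joints=1, sensors=("encoder", "torque")),
    Module("gripper", joints=1, sensors=("encoder", "tactile")),
    Module("wrist_camera", joints=0, sensors=("rgb",)),
]
embodiment = describe_embodiment(arm)
state = merge_readings(arm, {
    "base": {"encoder": 0.10},
    "elbow": {"encoder": 1.25, "torque": 3.4},
    "gripper": {"encoder": 0.02, "tactile": 0.8},
    "wrist_camera": {"rgb": "frame_0001"},
})
print(embodiment["degrees_of_freedom"], sorted(state))
```

Because the description is computed from whatever modules are present, reassembling the robot changes the input list but not the code that consumes the merged state.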

Autonomy has arrived in logistics and manufacturing: hundreds of robots already in operation use it daily in industrial environments.

RobCo has defined a five-level autonomy scale to make autonomy in factory environments tangible: a pragmatic development plan from programmed motion to an autonomous manufacturing platform.

The five levels describe how systems progressively move toward this goal - from rule-based planning and perception-based systems to increasingly AI-driven decision-making in Levels 3 and 4.

Physical AI is not the same as autonomy, but it is a key method for reaching Level 5. It builds on learning from real interaction and enables the transition from assisted intelligence to true autonomy.

Classical methods such as path planning or computer vision are important building blocks. However, they are not Physical AI by themselves; they are components of earlier autonomy levels.

These levels shift autonomy from scripted workflows to data-driven competence. RobCo is currently developing and testing these capabilities in the field. Level 5 is the development goal for the coming years.

Today, we are in a phase where we deliver “intelligent planning” and “perception” (Levels 1 and 2) at industrial scale.

What we do not yet see is fully free autonomy in any environment (Level 5). Development is incremental. We are currently working intensively on Level 3 (“embedded autonomy”) and Level 4 (“narrow autonomy”), where robots learn tightly limited tasks through demonstration. The next goal is to bring these learning capabilities directly into customer environments.

Where machines make decisions independently, the question of human safety arises. Physical AI does not rely on “black-box” decisions, but on controlled safety architectures. Ethically, the greatest value is relieving personnel by letting Physical AI handle dangerous or monotonous tasks. The purpose is to “automate the ordinary, so humans can do the extraordinary.”

RobCo’s autonomous manufacturing platform integrates all technological layers - mechanics, electronics, software, and AI - into one end-to-end autonomous system. This enables adaptive and cost-efficient automation solutions for industrial manufacturing. This approach differs fundamentally from attempts to immediately build complex humanoid robots, which are often expensive and fragile. Instead, we rely on a synthesis of proven industrial hardware and a state-of-the-art AI-first software stack.

Autonomous robotics starts where classical automation hits structural limits. Physical AI is the core component. Experience from recent years shows that isolated software solutions are not enough to robustly and flexibly control real production processes. Only by integrating AI into physical systems do we achieve the adaptability required in times of skilled-labor shortages and rising competitive pressure.

In the coming years, the development of Physical AI will accelerate rapidly. Machines will learn through demonstration, refine tasks autonomously, and operate in increasingly complex, semi-structured environments. With modular hardware and affordable entry points, industrial companies can benefit from the advantages of Physical AI today.

Are you ready to take your manufacturing to the next level with Physical AI? Talk to our experts now.

Physical AI describes the ability of robots to sense, understand, and act in the physical world with growing autonomy. It is a practical evolution of industrial robotics in which intelligence is embedded into modular systems - so machines learn independently from data in their real environment.

Physical AI is a technological approach that brings intelligence into physical systems, while humanoid robots are simply a particular form factor. Humanoid robots can be built on Physical AI, and RobCo uses it as well: we combine modular industrial robots with AI software into an autonomous industrial robotics platform. With our five-level autonomy scale, you can benefit from the latest developments today - even before Level 5 is reached.

Yes. AI-based systems can handle unstructured environments where classical automation reaches its limits. By combining robust industrial hardware with learning-capable software, a flexible and economical form of automation emerges - one that continuously improves over time during operation.